Our UniSER removes multiple challenging soft effects and shows zero-shot generalization ability while preserving identities.

Digital images are often degraded by soft effects such as lens flare, haze, shadows, and reflections, which reduce aesthetics even though the underlying pixels remain partially visible. Prevailing works address these degradations in isolation, developing highly specialized models that scale poorly and fail to exploit what these restoration problems have in common.

Leveraging the common essence of soft effects, i.e., semi-transparent occlusions, we introduce UniSER, a versatile foundation model capable of addressing diverse degradations caused by soft effects within a single framework. Our methodology centers on curating a massive 3.8M-pair dataset to ensure robustness and generalization, and on a tailored training pipeline that fine-tunes a Diffusion Transformer to learn robust restoration priors. This approach confers three key advantages:

To equip UniSER with robust generalization, we curated a comprehensive dataset by unifying pixel-aligned image pairs from four representative tasks: lens flare, shadow, haze, and reflection removal. We expanded the training data through real-world captures, 2D synthesis, and 3D rendering. This includes generating the HALO dataset by rendering 70K paired images with realistic flare effects, curating the Large Real-world Shadow Removal Dataset (LR-SRD) with 26K photo pairs, and using a physically motivated atmospheric rendering pipeline to synthesize highly realistic haze, yielding large-scale datasets like SYN-HAZE containing 24×70K image pairs.
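To make the haze synthesis step concrete, here is a minimal sketch based on the standard atmospheric scattering model, I = J·t + A·(1 − t) with t = exp(−β·d). This is an assumption for illustration only: the page does not specify the actual rendering pipeline behind SYN-HAZE, and the function name `synthesize_haze` and the parameters `beta` and `airlight` are hypothetical.

```python
import numpy as np

def synthesize_haze(clean, depth, beta=1.0, airlight=0.9):
    """Apply the standard atmospheric scattering model I = J*t + A*(1 - t).

    NOTE: illustrative assumption, not the authors' rendering pipeline.

    clean:    float array in [0, 1], shape (H, W, 3), the haze-free image J
    depth:    float array, shape (H, W), scene depth d (arbitrary units)
    beta:     scattering coefficient (hypothetical default, sets haze density)
    airlight: global atmospheric light A (hypothetical scalar default)
    """
    # Transmission decays exponentially with depth: t = exp(-beta * d).
    transmission = np.exp(-beta * depth)[..., None]
    # Blend the scene radiance with the airlight according to transmission.
    hazy = clean * transmission + airlight * (1.0 - transmission)
    return np.clip(hazy, 0.0, 1.0), transmission
```

Because the clean image is an input to the synthesis, each call yields a pixel-aligned (hazy, clean) pair, which is the property the dataset description relies on.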

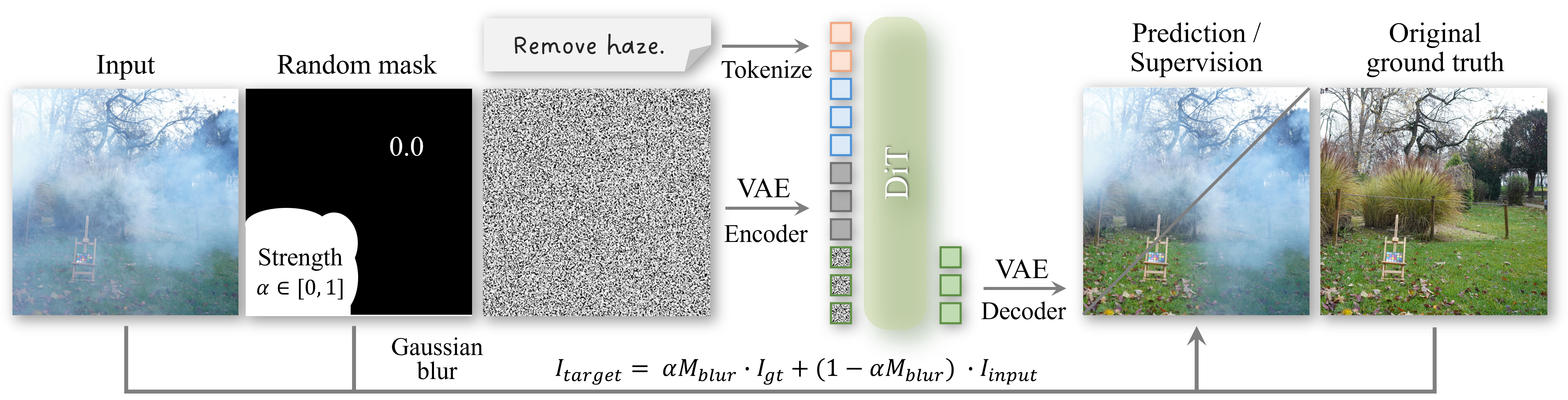

UniSER's core architecture reformulates diverse soft-effect tasks as a problem of discontinuous frame generation within a latent diffusion model. During training, we adopt a random masking strategy to robustly handle user-provided mask shapes. Furthermore, UniSER achieves Removal Strength Control by training the model to interpret continuous values in the conditional mask as an indicator of removal intensity.
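The random masking and strength conditioning described above could be sketched as follows. This is a hedged illustration, not the authors' training code: the rectangular mask sampler, the uniform strength distribution, and especially the linear blend used to build the partially restored target are assumptions, and the names `random_box_mask` and `make_training_sample` are hypothetical.

```python
import numpy as np

def random_box_mask(height, width, rng):
    """Binary mask with one random rectangle (a stand-in for random mask shapes)."""
    y0, y1 = sorted(rng.integers(0, height + 1, size=2))
    x0, x1 = sorted(rng.integers(0, width + 1, size=2))
    mask = np.zeros((height, width), dtype=np.float32)
    mask[y0:y1, x0:x1] = 1.0
    return mask

def make_training_sample(degraded, clean, rng):
    """Build a (conditional mask, target) pair for one training example.

    degraded, clean: float arrays of shape (H, W, 3), pixel-aligned.
    """
    mask = random_box_mask(degraded.shape[0], degraded.shape[1], rng)
    # ASSUMPTION: removal strength is sampled uniformly in [0, 1].
    strength = rng.uniform(0.0, 1.0)
    # Continuous mask values encode how strongly the effect should be removed.
    cond_mask = mask * strength
    w = cond_mask[..., None]
    # ASSUMPTION: the target is a linear blend; the page does not say how
    # partially restored targets are actually constructed.
    target = (1.0 - w) * degraded + w * clean
    return cond_mask, target
```

Under these assumptions, a mask value of 0 leaves a pixel untouched, 1 requests full removal, and intermediate values request partial reduction.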

UniSER effectively removes a wide range of soft effects while remaining highly faithful to the original image content, producing clean and content-consistent results. It significantly outperforms both specialist baselines and powerful generalist models like Nano Banana and FLUX Kontext.

UniSER supports precise, localized editing through mask-based control and allows users to specify a continuous strength value for a smooth transition from partial reduction to complete effect removal. Additionally, by inverting the process, our model can realistically add new soft effects to clean images or enhance existing ones. It also exhibits strong zero-shot generalization to novel degradations like rain and stains. For more results, please refer to the demo video.

@article{zhang2025uniser,
  title={UniSER: A Foundation Model for Unified Soft Effects Removal},
  author={Zhang, Jingdong and Zhang, Lingzhi and Liu, Qing and Chiu, Mang Tik and Barnes, Connelly and Wang, Yizhou and You, Haoran and Liu, Xiaoyang and Zhou, Yuqian and Lin, Zhe and others},
  journal={arXiv preprint arXiv:2511.14183},
  year={2025}
}